A client called me last year. Mid-size agency, about 150 people, operating in a heavily regulated industry where subject matter accuracy isn't optional — it's compliance. They'd invested in an AI content generation tool from an early-stage vendor. The pitch was compelling: automate their most time-consuming deliverables, free up their subject matter experts, cut turnaround times in half.

They treated it as an R&D initiative. Fair enough — that's a reasonable way to test something new. But after more than $50,000 paid to the vendor and countless internal team hours spent configuring, prompt-tuning, and reviewing output, the tool delivered content that was too generic for their standards. The vendor had built the product for more general content use cases. It had never been tested against the kind of domain-specific, expert-level accuracy this client needed.

They tried everything. Custom prompts. Fine-tuning sessions with the vendor. Internal review loops. Nothing brought the output quality to a standard their compliance team would accept. The tool sat on the shelf. The budget was gone. The team was demoralized.

This pattern isn't rare. I see it every month. And it almost always starts the same way: a company gets excited about what AI could do, buys a tool, and then tries to make it fit a problem they haven't clearly defined. That's not an AI strategy — that's expensive improvisation.

Here's what I've learned after years of helping Canadian startups and SMBs navigate this: AI works for smaller companies. But only if you start with the business problem, not the technology. And only if your data is ready to support it.

Why SMBs Keep Getting AI Wrong

Three patterns show up in almost every failed AI project I've walked into.

The first is buying tools before defining problems. The opening story is the poster child. A company hears about a shiny AI tool, gets a demo, feels the excitement in the room, and buys it. Then they look for a use case. That's backwards. The tool should be the last decision you make, not the first. I worked with a 60-person logistics company that purchased three different AI tools in 18 months — each one solving a problem nobody had clearly articulated. Total spend: over $40,000 in subscriptions alone, not counting the integration time.

The second is copying what enterprise companies do. What works for a 10,000-person corporation with a dedicated data science team and a $2M AI budget does not scale down to a 30-person operation. Enterprise AI projects assume you have infrastructure, clean data pipelines, and specialized talent. Most SMBs have none of that — and they don't need it. They need a fundamentally different approach: leaner, faster, more pragmatic. Trying to run the enterprise playbook with SMB resources is how you burn through your entire technology budget in one quarter.

The third is treating AI as a project instead of a capability. AI isn't a one-time implementation you "finish." It's an ongoing capability that grows with your business and your data. Companies that treat it as a project — with a start date, an end date, and a handoff — end up with a tool nobody uses six months later. I see this constantly: the AI initiative gets "completed," the vendor moves on, and the internal team quietly reverts to their old process because nobody invested in making the new one stick.

In my experience, these three mistakes (tool-first thinking, enterprise mimicry, and project mentality) account for most of the failed AI initiatives I see at companies under 200 people.

The Right Starting Point: Business Problem First

So where do you actually start? With your business, not with technology.

Audit your processes. Walk through your operations and identify the manual, repetitive, data-heavy tasks that eat team hours. Not the glamorous stuff — the boring stuff. The things your team complains about. The spreadsheet someone updates every Monday morning. The report that takes a full day to compile. The inbox that requires someone to read, categorize, and route 200 messages daily. These are the candidates for AI implementation.

Score by impact versus complexity. Think of it as a simple 2x2 grid. High impact and low complexity? Start here. High impact but high complexity? Plan for later, once you've built some capability and confidence. Low impact? Skip it entirely, regardless of how cool the technology looks. This scoring exercise takes an afternoon. It saves months of wasted effort.
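If it helps to make the grid concrete, here is a minimal sketch in Python. The 1-to-5 scale, the threshold of 3, and the example tasks are my own illustrative assumptions, not a standard; use whatever scale your team can agree on in an afternoon.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    impact: int      # 1 (low) to 5 (high), scored by the team (assumed scale)
    complexity: int  # 1 (low) to 5 (high), scored by the team (assumed scale)


def quadrant(c: Candidate, threshold: int = 3) -> str:
    """Place a candidate on the 2x2 grid. The threshold is an assumption."""
    if c.impact < threshold:
        return "skip"            # low impact, regardless of complexity
    if c.complexity < threshold:
        return "start here"      # high impact, low complexity
    return "plan for later"      # high impact, high complexity


# Hypothetical candidates from a process audit.
candidates = [
    Candidate("Monday spreadsheet update", impact=4, complexity=1),
    Candidate("Inbox triage (200 messages/day)", impact=5, complexity=4),
    Candidate("Office playlist generator", impact=1, complexity=2),
]

for c in candidates:
    print(f"{c.name}: {quadrant(c)}")
```

The point of writing it down this way is that the low-impact check comes first: nothing gets onto the roadmap just because it looks easy.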

Pick the win that saves the most time or money with the least integration risk. Your first AI project should be a clear, measurable win — not a moonshot. You're not trying to transform the company overnight. You're trying to prove that AI can deliver real value in your specific context.

Here's what "doing it right" looks like. A 40-person professional services firm audited their proposal process. They found their team was spending 12 hours on each client proposal — pulling data from their CRM, referencing past projects, writing custom sections, formatting. They piloted an AI drafting tool that had access to their CRM data and previous proposals as shared context. Within six weeks, proposal time dropped to four hours. The quality was consistent. The team actually liked using it because it eliminated the parts of the job they hated. That's not a hypothetical — it's the kind of outcome I see when companies follow this framework instead of chasing the latest AI demo.

Your Data Should Work for You — The Agent Is Just the Tool

This is the insight that separates a real AI strategy from a tool purchase: AI without context produces generic output. That's exactly what happened in the opening story. The tool had no deep understanding of the client's domain, their specific standards, their client history, or their compliance requirements. It was generating content in a vacuum. Of course the output was generic — it had nothing specific to work with.

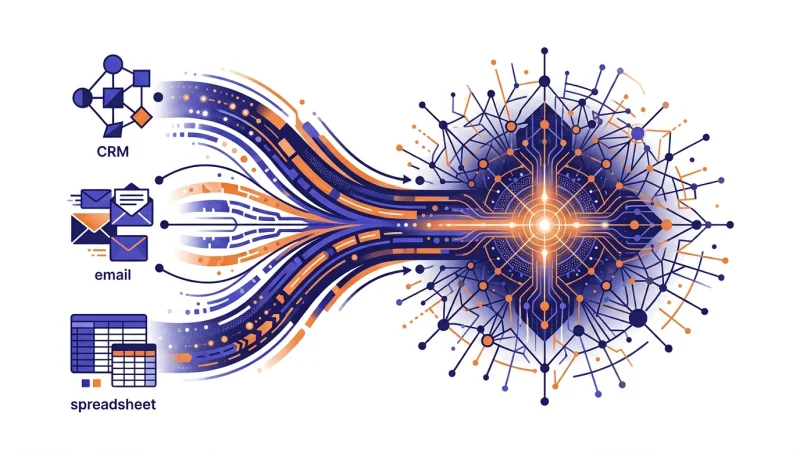

Most SMBs have their data scattered everywhere. Client information lives in the CRM. Project details are in a project management tool. Financial data is in accounting software. Communications are spread across email, Slack, and shared drives. Institutional knowledge lives in people's heads. AI tools can't leverage what they can't access.

Before you pick any AI tool, get your data house in order. Map your data sources. Understand what lives where, what format it's in, and how systems connect (or don't). Think about ingestion and transformation: how do you get data from where it is to where AI tools need it?
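A data source map does not need special tooling to start. As a rough sketch, assuming a hypothetical `DataSource` record and made-up export formats, a plain inventory like this is enough to show which systems are islands:

```python
from dataclasses import dataclass, field


@dataclass
class DataSource:
    name: str   # e.g. "CRM"
    holds: str  # what kind of information lives here
    fmt: str    # how it can be read or exported (illustrative values)
    connects_to: list[str] = field(default_factory=list)


# Hypothetical inventory for a small company.
sources = [
    DataSource("CRM", "client information", "API export", ["Accounting"]),
    DataSource("Project tool", "project details", "CSV export"),
    DataSource("Accounting", "financial data", "CSV export", ["CRM"]),
    DataSource("Email and Slack", "communications", "unstructured text"),
]


def isolated(sources: list[DataSource]) -> list[str]:
    """Names of sources with no recorded link to another system, in either direction."""
    linked = {target for s in sources for target in s.connects_to}
    return [s.name for s in sources if not s.connects_to and s.name not in linked]


print(isolated(sources))  # ['Project tool', 'Email and Slack']
```

The isolated sources are usually where the ingestion work begins.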

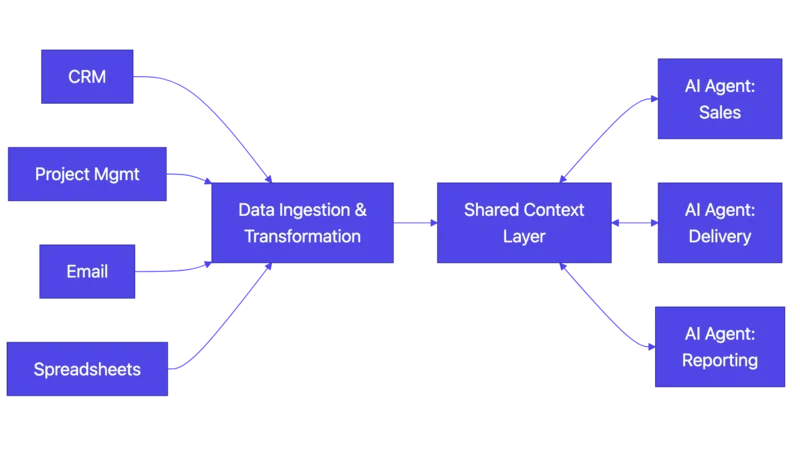

The real unlock is shared, iterative context. Instead of each AI tool starting from zero every time it runs, build a system where each tool in your workflow gathers and creates more context as it works. This spans the entire client journey — from your sales pipeline all the way through delivery. At each step, the context on the client and the project grows. When a new tool or agent needs to do work, it draws from this accumulated knowledge instead of starting cold.

The practical path looks like this: map your data sources, design ingestion pipelines, build an easily traversable structure, and create an interface layer so AI agents and tools can query and append information. This sounds technical — and the implementation is — but the concept is simple. You're building a shared memory for your business that every AI tool can tap into.
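To show what "query and append" can mean in practice, here is a deliberately small in-memory sketch. The `SharedContext` and `ContextEntry` names, the stage labels, and the client are all hypothetical; a real system would sit on a database and handle access control, which this does not.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ContextEntry:
    stage: str   # e.g. "sales" or "delivery" (illustrative labels)
    source: str  # which tool or person added the entry
    note: str


class SharedContext:
    """In-memory stand-in for a shared business memory, keyed by client."""

    def __init__(self) -> None:
        self._entries: dict[str, list[ContextEntry]] = defaultdict(list)

    def append(self, client: str, entry: ContextEntry) -> None:
        """Any tool or agent can add what it learned."""
        self._entries[client].append(entry)

    def query(self, client: str, stage: Optional[str] = None) -> list[ContextEntry]:
        """Return everything known about a client, optionally for one stage."""
        entries = self._entries.get(client, [])
        return [e for e in entries if stage is None or e.stage == stage]


ctx = SharedContext()
ctx.append("Acme", ContextEntry("sales", "CRM sync", "Needs bilingual deliverables"))
ctx.append("Acme", ContextEntry("delivery", "drafting agent", "First proposal draft approved"))

print(len(ctx.query("Acme")))                   # 2
print(ctx.query("Acme", stage="sales")[0].note)  # Needs bilingual deliverables
```

The drafting agent in this example starts with what sales already learned, which is the whole idea: context accumulates instead of resetting.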

This is fundamentally different from "buy a SaaS tool and hope for the best." It's also why having the right technology leadership matters — someone needs to design this architecture, not just plug in tools.

How to Run a 90-Day AI Pilot Without Burning Money

Once you've identified the right problem, sorted your data, and built some context infrastructure, it's time to pilot. Here's how to do it without repeating the $50K mistake.

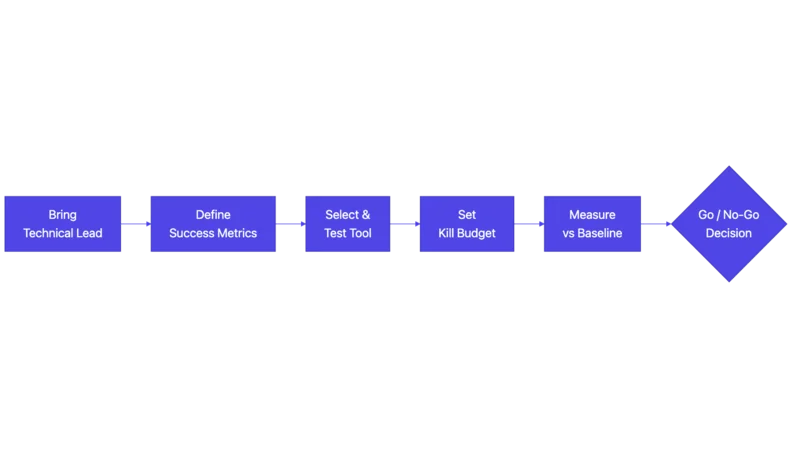

Bring in someone who speaks both languages. You need a technical person at the table who understands both the technology and your business context. Not a vendor selling their tool. Not a consultant who's never shipped anything. Someone who can evaluate what's realistic, spot when a tool doesn't fit, and make the go/no-go call honestly. This is exactly the role a fractional CTO fills — strategic technology leadership without the full-time cost. In Canada, programs like SR&ED and IRAP can offset some of this investment for qualifying AI projects.

Define success metrics before you start. What does "working" look like? Be specific. "Reduce proposal drafting time from 8 hours to 2 hours." "Cut customer response time from 24 hours to 4 hours." "Automate 80% of invoice data entry." Not "improve efficiency." Not "leverage AI capabilities." Measurable outcomes that everyone agrees on before a single dollar is spent.
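One way to keep metrics honest is to write them down as data rather than as slogans. This is a sketch under my own assumptions: the `SuccessMetric` class is hypothetical, and it only covers metrics with a non-zero baseline.

```python
from dataclasses import dataclass


@dataclass
class SuccessMetric:
    name: str
    baseline: float  # where you are today (must be non-zero here)
    target: float    # what "working" means
    unit: str

    def required_change_pct(self) -> float:
        """Percent change from baseline needed to reach the target."""
        return (self.target - self.baseline) / self.baseline * 100

    def is_met(self, measured: float) -> bool:
        """Handles both lower-is-better and higher-is-better targets."""
        if self.target <= self.baseline:
            return measured <= self.target
        return measured >= self.target


# The two time-based examples from the paragraph above.
metrics = [
    SuccessMetric("Proposal drafting time", baseline=8, target=2, unit="hours"),
    SuccessMetric("Customer response time", baseline=24, target=4, unit="hours"),
]

for m in metrics:
    print(f"{m.name}: {m.baseline:g} -> {m.target:g} {m.unit} "
          f"({m.required_change_pct():.0f}% change)")
```

If a goal cannot be expressed as a baseline, a target, and a unit, it is probably still "improve efficiency" in disguise.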

Start with existing tools, but only after you've done the homework. No custom ML models or training datasets yet. Now that you've defined the problem, mapped your processes, and sorted your data, test whether an off-the-shelf tool gets you at least 80% of the way to your target. The opening story's mistake wasn't using an existing tool. It was skipping straight to the tool without doing any of this first.

Set a kill budget. Decide upfront: "We'll spend $X and Y hours. If we don't see Z by then, we stop." This prevents the sunk-cost spiral. When you've spent $30K and the tool isn't delivering, it's psychologically brutal to walk away. Setting the kill budget in advance takes the emotion out of the decision.
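The kill budget is simple enough to write as a rule. A minimal sketch, with a hypothetical `KillBudget` class and purely illustrative dollar and hour limits:

```python
from dataclasses import dataclass


@dataclass
class KillBudget:
    max_dollars: float  # the "$X" agreed upfront
    max_hours: float    # the "Y hours" agreed upfront

    def should_stop(self, spent_dollars: float, spent_hours: float,
                    target_met: bool) -> bool:
        """Stop when either limit is reached and the agreed result (Z) is missing."""
        exhausted = (spent_dollars >= self.max_dollars
                     or spent_hours >= self.max_hours)
        return exhausted and not target_met


budget = KillBudget(max_dollars=10_000, max_hours=120)  # illustrative numbers

print(budget.should_stop(6_000, 80, target_met=False))   # False
print(budget.should_stop(10_500, 90, target_met=False))  # True
```

Nothing in the rule asks how anyone feels about the money already spent, which is exactly why it works.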

Measure against your baseline. The audit from earlier gave you a baseline — how long things take now, what they cost, where the errors happen. Compare relentlessly. Every week, measure the pilot against these numbers.
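The weekly comparison is plain arithmetic. In this sketch the 12-hour baseline comes from the proposal example earlier in the article, while the weekly numbers are hypothetical:

```python
def improvement_pct(baseline: float, measured: float) -> float:
    """Percent reduction relative to baseline (positive means faster or cheaper)."""
    return (baseline - measured) / baseline * 100


baseline_hours = 12.0                 # hours per proposal before the pilot
weekly_hours = [11.0, 9.0, 7.5, 6.0]  # hypothetical weekly measurements

for week, hours in enumerate(weekly_hours, start=1):
    pct = improvement_pct(baseline_hours, hours)
    print(f"Week {week}: {hours:g}h per proposal, {pct:.0f}% better than baseline")
```

For reference, the drop from 12 hours to 4 in that earlier example works out to a reduction of about 67%.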

Set a go/no-go decision point. At the end of the 90 days, make a binary decision based on the metrics you defined. Not feelings. Not "it seems promising." Not "we just need a few more weeks." Data. This is exactly what I did with the client from the opening story: we stopped new investment in the failing tool, set a clear target, and built the right approach — the shared context system — from scratch.
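To keep the final call binary, it can help to reduce it to a function of the numbers. This sketch assumes every metric is lower-is-better (hours, cost, error counts); the `go_no_go` function and the day-90 figures are illustrative only.

```python
def go_no_go(results: dict[str, tuple[float, float]]) -> str:
    """results maps metric name -> (target, measured), where lower is better."""
    missed = [name for name, (target, measured) in results.items()
              if measured > target]
    if not missed:
        return "go"
    return "no-go: missed " + ", ".join(missed)


# Hypothetical measurements at day 90.
day_90 = {
    "Proposal drafting hours": (2.0, 1.8),
    "Customer response hours": (4.0, 6.0),
}

print(go_no_go(day_90))  # no-go: missed Customer response hours
```

There is no "promising" branch in that function on purpose.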

The Tool Only Works If Your Team Uses It

Here's a truth that technology vendors won't tell you: the best AI implementation is worthless if your team reverts to their old process the moment nobody's watching.

Change management for SMBs isn't the enterprise playbook. You don't need a six-month rollout with change champions, steering committees, and a dedicated transformation office. What you need is simpler but just as deliberate: pick one internal advocate — ideally the person who feels the pain of the current process most acutely — train them first, and let them pull others in organically.

Watch for three resistance patterns:

"It's slower than how I do it." This is almost always true in week one and almost always wrong by week four. Set that expectation explicitly. Tell your team: the first two weeks will feel slower. That's normal. If it's still slower after a month, we'll reassess.

"I don't trust the output." Fair. Build in human review checkpoints. Don't ask people to blindly trust AI-generated work — that's unreasonable and, in regulated industries, potentially dangerous. Let them review, correct, and gradually build confidence. Trust is earned, not mandated.

"This will replace me." Reframe it immediately. The person who learns the tool becomes more valuable, not less. They're now the team member who can do in two hours what used to take eight. That's a promotion argument, not a layoff risk.

When your AI tools have access to shared business context — the system from the earlier section — adoption gets dramatically easier. The output quality is higher from day one because the AI actually knows the business. That shrinks the trust gap.

Successful ERP implementations have long relied on champions: you identify the power users, make them part of the process, and they drive adoption across the team when you're not in the room. AI is no different.

Ship It Ugly, Fix It Fast

The biggest adoption killer isn't resistance — it's waiting. Waiting until the solution is "ready." Waiting until the output is "perfect." Waiting until you've thought of every edge case. It's never ready. Ship it anyway.

Get the minimum viable version in front of real users doing real work. Collect feedback. Iterate. Repeat. Short cycles — one to two weeks — with real user feedback beat months of internal polishing. Each cycle makes the tool better and builds team buy-in simultaneously. People adopt what they helped shape.

Put the actual users in the room — not just management. This is critical and almost universally ignored. Executive assumptions about how work gets done are almost never accurate. The person clicking the buttons every day knows where the real friction is. Bring them into the iteration loop from day one.

This isn't just about getting better feedback — though you will. It's about ownership. When the people who will use the tool daily help shape it, they become its champions. They're invested in making it work because it's partly theirs. They'll defend it to skeptical colleagues. They'll find workarounds for edge cases you never considered. They'll train the next person voluntarily.

It also surfaces the workflow quirks that no executive summary will ever capture. The gap between "how management thinks the process works" and "how it actually works" is where AI projects go to die. The only way to close that gap is to have the people who do the work in the room while you're building the tools.

When You Don't Need AI

I'll tell you something most AI consultants won't: sometimes you don't need AI at all.

A significant number of prospects come to me asking for "AI to solve this problem." When they describe the problem, it's clearly something a straightforward algorithm can solve: a rules engine, a decision tree, a well-structured database query. That code can be written in a few days, even with AI assistance, and it doesn't need AI to run.

Not every automation problem is an AI problem. Sometimes you need a for-loop, not a neural network. A conditional statement, not a large language model. The right answer is often simpler, cheaper, faster to build, and easier to maintain.
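Here is roughly what "a for-loop, not a neural network" looks like for something like message routing. The keywords and queue names are made up for illustration, and real rules would need more care (word boundaries, priorities), but the shape is the point:

```python
# Hypothetical keyword-to-queue rules, checked in order.
RULES = [
    ("invoice", "accounting"),
    ("refund", "accounting"),
    ("password", "it-support"),
    ("quote", "sales"),
]


def route(message: str, default: str = "general") -> str:
    """Return the queue for the first keyword found in the message."""
    text = message.lower()
    for keyword, queue in RULES:
        if keyword in text:
            return queue
    return default


print(route("Please resend the invoice for March"))  # accounting
print(route("Can I get a quote for 50 seats?"))       # sales
print(route("Hello, quick question"))                 # general
```

Every decision this code makes can be read, tested, and changed by editing one line, which is hard to say about a model.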

A good technology advisor tells you when NOT to use the expensive approach. That's how you know they're working for you, not for their billable hours.

What Strategic AI Adoption Actually Looks Like

Let's go back to the opening story. What would have been different if that agency had followed this framework?

They would have defined the problem first — what specific content, for which use case, measured against what quality standard. They would have audited their processes and found that the real bottleneck wasn't content generation, it was the domain knowledge required to generate it. They would have sorted their data and realized that their institutional knowledge — the compliance standards, the subject matter expertise, the client-specific context — wasn't accessible to any tool. They would have brought in a technical advisor who could evaluate whether an early-stage vendor's tool was capable of meeting their requirements. They would have set a kill budget and a go/no-go point. They would have invested in adoption with real champions from the team. They would have iterated with real users instead of polishing in a boardroom. And they might have discovered that parts of the problem didn't need AI at all.

Instead, they started with the tool and worked backwards. $50,000 and months of team time later, they had generic content nobody could use.

AI strategy isn't about finding the right tool. It's about understanding your business deeply enough to know where AI creates value — and having the discipline to start small, measure honestly, and scale what works.

Want to figure out where AI actually fits in your business? Book a 30-minute call — no pitch, no pressure. Just an honest conversation about what's realistic for your situation.

