I have seen this pattern dozens of times. A company hires an outsourced development shop to build an application. The shop delivers something. It works, sort of. Then the company tries to add a feature, fix a bug, or scale to more users, and everything falls apart.

The codebase has no tests. No documentation. No consistent architecture. Every developer who touched it solved problems their own way. The code works today, but it is fragile, expensive to change, and impossible to hand off to a new team without significant rework.

If you are reading this, you are probably in this situation right now. This playbook covers the pattern, the assessment, the decision framework, and the recovery process. It is written from the perspective of someone who has done this rescue work repeatedly, most recently with a logistics startup whose entire platform had to be rebuilt from an outsourced codebase.

How This Happens

The pattern is remarkably consistent across industries, company sizes, and vendor types.

Phase 1: The promising start. You hire a development shop. They are responsive, the demos look good, and the project seems to be on track. You do not have a technical person on your side evaluating the architecture decisions being made under the surface.

Phase 2: The scope grows. Requirements evolve, as they always do. The shop accommodates each change, but without a coherent architecture strategy, each addition is bolted on rather than designed in. The codebase grows, but the structure does not.

Phase 3: The handoff. The shop delivers the application. It passes basic acceptance testing. You pay the final invoice. The shop moves on to their next client.

Phase 4: The reality. You need your first major change. The new developer you hired to maintain the code spends two weeks just trying to understand how it works. They estimate the change will take three months instead of the three weeks you expected. Or worse, they tell you the entire approach needs to be rethought.

Why does this keep happening? Development shops are optimized for delivery, not long-term ownership. They take a scope, build it, and hand it off. That is the business model. It is not a criticism. It is just a structural misalignment between what you need (a system that evolves with your business) and what they sell (a project with a start and end date).

The missing piece is almost always the same: nobody on the client side had the technical depth to evaluate architecture decisions in real time. By the time the problems become visible to a non-technical founder, they are already expensive to fix.

Step 1: Do Not Panic. Assess.

The first instinct is usually to start over. Burn it down, hire a new team, build it right this time. That instinct is wrong about 60% of the time. Some codebases are salvageable. Some are not. The only way to know is a structured assessment.

The code review triage takes 1-2 weeks and answers three questions:

Is the architecture sound? A messy codebase with a reasonable underlying architecture is rescuable. A clean-looking codebase with a fundamentally wrong architecture (wrong database model, wrong deployment pattern, wrong technology choice for the problem) is not.

Are there tests? Any tests. Unit tests, integration tests, end-to-end tests. Tests are the clearest signal of code quality. Not because tests prevent bugs, but because code that was written to be testable tends to be structured, modular, and maintainable. Code with zero tests was almost certainly written without these qualities.

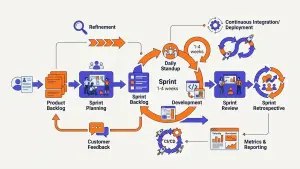

Can the system be deployed reliably? Can you build and deploy the application without the original developer's laptop? Is there a CI/CD pipeline? Can you run the application locally? If deployment depends on tribal knowledge that left with the outsourced team, you have a significant problem independent of code quality.
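Two of these three questions (tests and deployability) can be given a rough first pass automatically before anyone reads a line of code. The sketch below is only a starting point: the file names and glob patterns are assumptions about common conventions, not a definitive list, and the architecture question still needs a human reviewer.

```python
from pathlib import Path

# Hypothetical markers; adjust them to the stack under review.
CI_MARKERS = (".github/workflows", ".gitlab-ci.yml", "Jenkinsfile")
TEST_GLOBS = ("test_*.py", "*_test.py", "*.test.js", "*.spec.ts", "*_test.go")
LOCAL_RUN_MARKERS = ("Dockerfile", "docker-compose.yml", "Makefile")


def triage_signals(repo_root: Path) -> dict[str, bool]:
    """Return coarse yes/no signals for a first-pass codebase triage."""
    # Any file matching a test naming pattern, anywhere in the tree.
    has_tests = any(
        next(repo_root.rglob(pattern), None) is not None for pattern in TEST_GLOBS
    )
    # CI configuration checked in at the repository root.
    has_ci = any((repo_root / marker).exists() for marker in CI_MARKERS)
    # Something that documents how to build or run the app locally.
    has_local_run = any((repo_root / marker).exists() for marker in LOCAL_RUN_MARKERS)
    return {"has_tests": has_tests, "has_ci": has_ci, "has_local_run": has_local_run}
```

A result with all three flags false does not prove the code is bad, but it does tell you where the 1-2 weeks of manual review should start.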

What you will find

In most failed outsourced projects, the assessment reveals a combination of these issues:

- No separation of concerns (business logic mixed with database queries mixed with UI rendering)

- No error handling or logging (the application works until it does not, and then nobody knows why)

- Hardcoded configuration (API keys, database credentials, environment-specific values embedded in the code)

- No database migrations (the schema was modified directly in production)

- Copy-paste duplication instead of abstraction (the same logic repeated in dozens of places)

- No security practices (SQL injection vulnerabilities, unsanitized user input, exposed secrets)

The severity of these issues determines whether rescue or rewrite is the right path.
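Hardcoded configuration is usually the cheapest item on this list to fix. As a minimal sketch, assuming the application needs a database URL and one third-party API key (the names `DATABASE_URL`, `PAYMENT_API_KEY`, and `DEBUG` are illustrative, not taken from any real project), settings can be read from the environment and validated at startup:

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    payment_api_key: str
    debug: bool = False


def load_settings(env=None) -> Settings:
    """Build Settings from environment variables, failing fast if one is missing."""
    env = os.environ if env is None else env
    required = ("DATABASE_URL", "PAYMENT_API_KEY")  # hypothetical variable names
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing required settings: {', '.join(missing)}")
    return Settings(
        database_url=env["DATABASE_URL"],
        payment_api_key=env["PAYMENT_API_KEY"],
        debug=env.get("DEBUG", "false").lower() == "true",
    )
```

Failing at startup with a clear message is a deliberate choice here: a missing credential surfaces on deploy rather than in the middle of a customer request.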

Step 2: Decide. Rescue, Rewrite, or Replace.

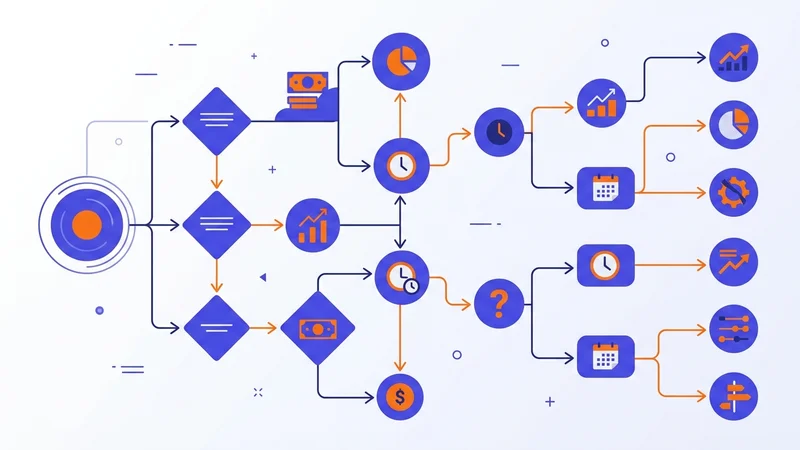

This is the most expensive decision you will make. Getting it wrong costs months and hundreds of thousands of dollars. Here is the framework.

Rescue

Choose rescue when: The architecture is fundamentally sound but the code quality is poor. The database model is correct. The technology stack is appropriate. The problems are in execution, not design.

What rescue looks like: Incrementally improve the codebase while keeping the application running. Add tests around critical paths first. Refactor the worst modules. Implement CI/CD. Fix security vulnerabilities. This typically takes 3-6 months for a medium-complexity application.

Cost: 40-60% of a full rewrite. You preserve the investment in the original build while fixing the structural problems.

Rewrite

Choose rewrite when: The architecture is wrong for the problem. The database model cannot support the features you need. The technology stack was a poor choice. Or the codebase is so tangled that the cost of untangling it exceeds the cost of starting fresh.

What rewrite looks like: Build the new system alongside the old one. Migrate users and data incrementally. Do not attempt a "big bang" cutover. The new system should be in production handling real traffic before the old one is decommissioned.
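One common way to migrate users incrementally is to route a fixed percentage of them to the new system and raise that percentage over time. The sketch below assumes users have a stable string identifier. The function name and the hash-bucket scheme are illustrative choices, not a description of any particular project's setup.

```python
import hashlib


def routes_to_new_system(user_id: str, rollout_percent: int) -> bool:
    """Deterministically decide whether a user is served by the new system."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    # Hash the id so each user lands in a stable bucket from 0 to 99.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    # Users in buckets below the threshold go to the new system.
    return bucket < rollout_percent
```

Because the bucket depends only on the user id, a user moved to the new system at 10% stays there at 25% and 50%, which avoids bouncing people between the two systems as the rollout widens.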

Cost: 100% of a new build, plus 20-30% overhead for migration, data transfer, and parallel running. Typically 6-12 months for a medium-complexity application.
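To make the rescue and rewrite percentages concrete, here is a small worked calculation. The $300,000 new-build figure is purely hypothetical, and the sketch assumes that "a full rewrite" in the rescue estimate means the rewrite cost including migration overhead.

```python
def estimate_costs(new_build_cost: float) -> dict[str, tuple[float, float]]:
    """Apply the percentage ranges quoted above to a single new-build estimate."""
    # Rewrite: 100% of a new build plus 20-30% migration overhead.
    rewrite = (new_build_cost * 1.20, new_build_cost * 1.30)
    # Rescue: 40-60% of a full rewrite (assumed here to include the overhead).
    rescue = (rewrite[0] * 0.40, rewrite[1] * 0.60)
    return {"rescue": rescue, "rewrite": rewrite}


costs = estimate_costs(300_000)  # hypothetical new-build estimate in dollars
for option, (low, high) in costs.items():
    print(f"{option}: ${low:,.0f} to ${high:,.0f}")
```

With that input, rescue lands at roughly $144,000 to $234,000 and rewrite at roughly $360,000 to $390,000. The ranges do not overlap, which is why the assessment in Step 1 is worth doing before committing to either path.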

Replace

Choose replace when: A commercial off-the-shelf product or SaaS platform does 80%+ of what your custom application does. The custom build was unnecessary in the first place. This is more common than founders want to admit.

Cost: License fees plus implementation and data migration. Often the cheapest and fastest option, but only works if a suitable product exists.

The decision matrix

| Signal | Points to Rescue | Points to Rewrite |
|---|---|---|
| Architecture | Sound but poorly executed | Fundamentally wrong for the problem |
| Database model | Correct, just messy | Cannot support required features |
| Technology stack | Appropriate, just poorly used | Wrong choice for the problem |
| Test coverage | Some tests exist, can add more | Zero tests, untestable structure |
| Team understanding | New team can follow the logic | Nobody can explain how it works |
| Timeline pressure | Need to keep shipping now | Can afford 6+ months |
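The matrix can be recorded as a simple tally so the assessment leaves a written trail. This is a sketch only: the signal keys mirror the table rows, and counting every signal equally is an assumption made for illustration. In practice a wrong architecture may outweigh everything else, so treat the counts as input to a conversation rather than a verdict.

```python
from collections import Counter

# Keys mirror the rows of the decision matrix above.
SIGNALS = (
    "architecture",
    "database_model",
    "technology_stack",
    "test_coverage",
    "team_understanding",
    "timeline_pressure",
)


def tally_signals(assessment: dict[str, str]) -> Counter:
    """Count how many signals point to 'rescue' and how many to 'rewrite'."""
    unknown = set(assessment) - set(SIGNALS)
    if unknown:
        raise ValueError(f"Unknown signals: {sorted(unknown)}")
    invalid = {value for value in assessment.values() if value not in ("rescue", "rewrite")}
    if invalid:
        raise ValueError(f"Each signal must be 'rescue' or 'rewrite', got: {sorted(invalid)}")
    return Counter(assessment.values())


# Hypothetical assessment of one codebase.
example = {
    "architecture": "rescue",
    "database_model": "rescue",
    "technology_stack": "rescue",
    "test_coverage": "rewrite",
    "team_understanding": "rescue",
    "timeline_pressure": "rescue",
}
print(tally_signals(example))
```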

Step 3: The Rescue Process

When rescue is the right call, the process follows a predictable sequence. I will illustrate with a real example.

We took on a logistics startup whose outsourced development team had built their entire last-mile delivery platform. The platform worked for a small number of users but could not scale. The codebase had no tests, no documentation, and no consistent architecture. Multiple developers had worked on it without coordination, each solving problems their own way.

Month 1: Stabilize. Before improving anything, stop the bleeding. Fix the critical bugs that are affecting users right now. Set up monitoring so you know when things break instead of waiting for customer complaints. Implement basic CI/CD so deployments are repeatable.

Months 2-3: Foundation. Add tests around the most critical business logic. Not full coverage. Just the paths that, if broken, would stop the business from operating. Refactor the database access layer so queries are not scattered throughout the codebase. Implement proper error handling and logging.
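As a minimal sketch of what the foundation work can look like, the example below pulls one query into a single data access class and pins its behavior with a test. The `deliveries` table, its columns, and the class name are hypothetical, and SQLite is used only so the example runs without any setup.

```python
import sqlite3
import unittest


class DeliveryRepository:
    """Single home for delivery queries (hypothetical table and column names)."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn

    def pending_for_driver(self, driver_id: int) -> list[tuple[int, str]]:
        # Parameterized query: the driver id is never interpolated into the SQL string.
        cursor = self._conn.execute(
            "SELECT id, address FROM deliveries "
            "WHERE driver_id = ? AND status = 'pending' ORDER BY id",
            (driver_id,),
        )
        return cursor.fetchall()


class PendingDeliveriesTest(unittest.TestCase):
    def setUp(self) -> None:
        # In-memory database so the test needs no external services.
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute(
            "CREATE TABLE deliveries ("
            "id INTEGER PRIMARY KEY, driver_id INTEGER, address TEXT, status TEXT)"
        )
        self.conn.executemany(
            "INSERT INTO deliveries (driver_id, address, status) VALUES (?, ?, ?)",
            [
                (1, "12 Dock St", "pending"),
                (1, "4 Mill Rd", "delivered"),
                (2, "9 Quay Ln", "pending"),
            ],
        )
        self.repo = DeliveryRepository(self.conn)

    def tearDown(self) -> None:
        self.conn.close()

    def test_returns_only_pending_rows_for_that_driver(self) -> None:
        self.assertEqual(self.repo.pending_for_driver(1), [(1, "12 Dock St")])
```

Saved as a module, this runs with `python -m unittest`. Once the query lives in one place and has a test, later changes to the schema or the database engine touch one class instead of every file that used to build the SQL by hand.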

Months 4-5: Architecture. Now you can start making structural improvements. Extract services where appropriate. Implement proper separation of concerns. Migrate to a scalable infrastructure (in the logistics case, this meant moving from a single server to Kubernetes on AWS EKS).

Month 6+: Features. With a stable, tested, properly architected foundation, the team can now build new features at a sustainable pace. The cost per feature drops dramatically because developers are not fighting the codebase.

The logistics startup went from a fragile outsourced codebase to a production-ready platform running on Kubernetes with a proper engineering team and SDLC in place. The full case study is here.

Step 4: Prevent It From Happening Again

Whether you rescue, rewrite, or replace, the underlying problem was the same: nobody with technical authority was in the room when the architecture decisions were being made. Fix that, and the rest follows.

If you are hiring another vendor: Have a fractional CTO define the architecture before the vendor writes a line of code. The CTO sets the standards, reviews the code weekly, and owns the technical relationship. The vendor builds. The CTO ensures what gets built is maintainable, scalable, and aligned with your business needs.

If you are building an internal team: Hire a senior technical leader before you hire developers. The order matters. Developers without architecture guidance will recreate the same problems you just escaped. The leader does not have to be full-time. A fractional CTO at 10-20 hours per week can set the architecture, define the SDLC, hire the first developers, and create the technical foundation that the team builds on.

If you are going with a SaaS replacement: Have someone technical evaluate the platform against your actual requirements, not the vendor's demo. Check integration capabilities, data export options, and what happens if you need to migrate away in two years. Lock-in is the new version of the outsourced code problem.

The common thread: technology decisions that cost the most to fix are the ones made without qualified technical oversight. A fractional CTO costs $8,000 to $15,000 per month. A failed outsourced project costs $100,000 to $500,000 to recover from. The math is straightforward.
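As a rough worked version of that math, using only the ranges quoted above (actual fees and recovery costs will vary):

```python
def months_of_oversight(recovery_cost: float, monthly_fee: float) -> float:
    """How many months of fractional CTO fees equal one recovery bill."""
    return recovery_cost / monthly_fee


# Most conservative pairing: cheapest recovery against the highest monthly fee.
low = months_of_oversight(100_000, 15_000)
# Most favorable pairing: costliest recovery against the lowest monthly fee.
high = months_of_oversight(500_000, 8_000)
print(f"{low:.1f} to {high:.1f} months")  # prints "6.7 to 62.5 months"
```

Even in the most conservative pairing, one recovery pays for more than six months of oversight.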

Ready to Assess?

If you are staring at an outsourced codebase that is not working, the first step is an honest assessment. Not a sales pitch. Not a scare tactic. A structured evaluation of what you have, what is salvageable, and what the options actually cost.

We will look at your situation and give you a straight answer about whether rescue, rewrite, or replace is the right path.

Related: Rescuing a Logistics Startup | CTO-Led Software Development | Fractional CTO Services
